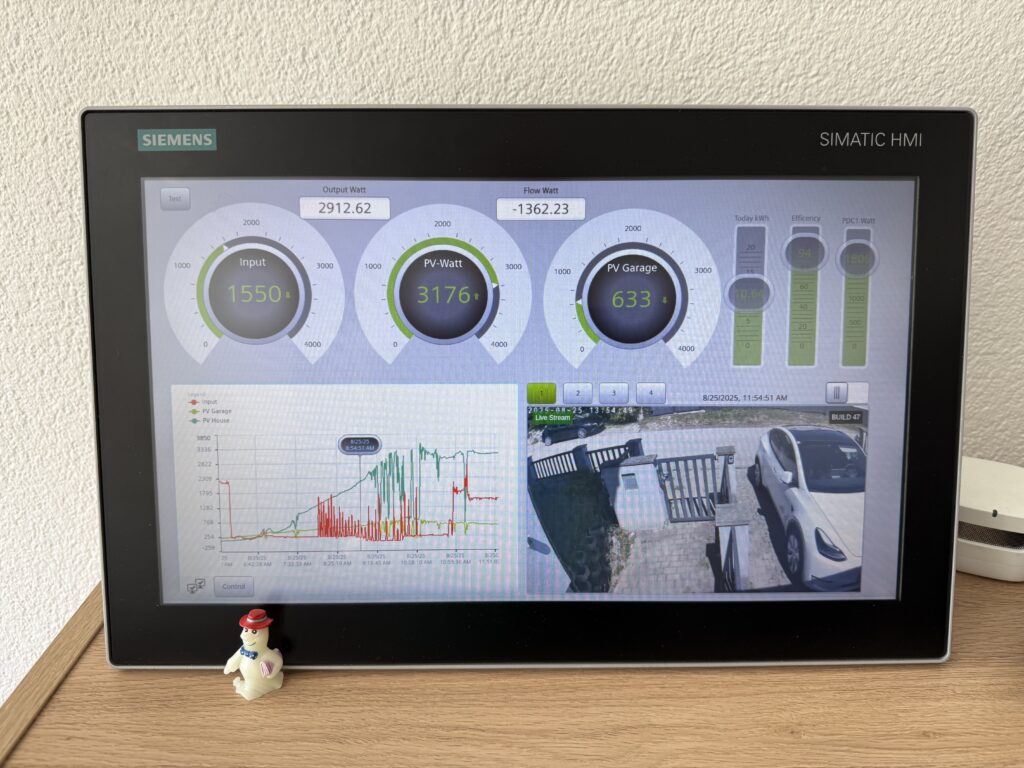

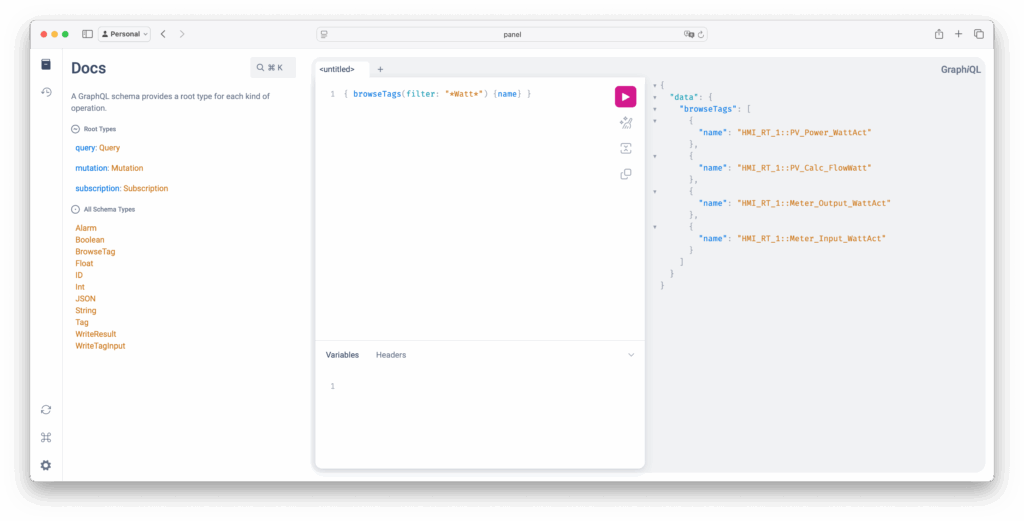

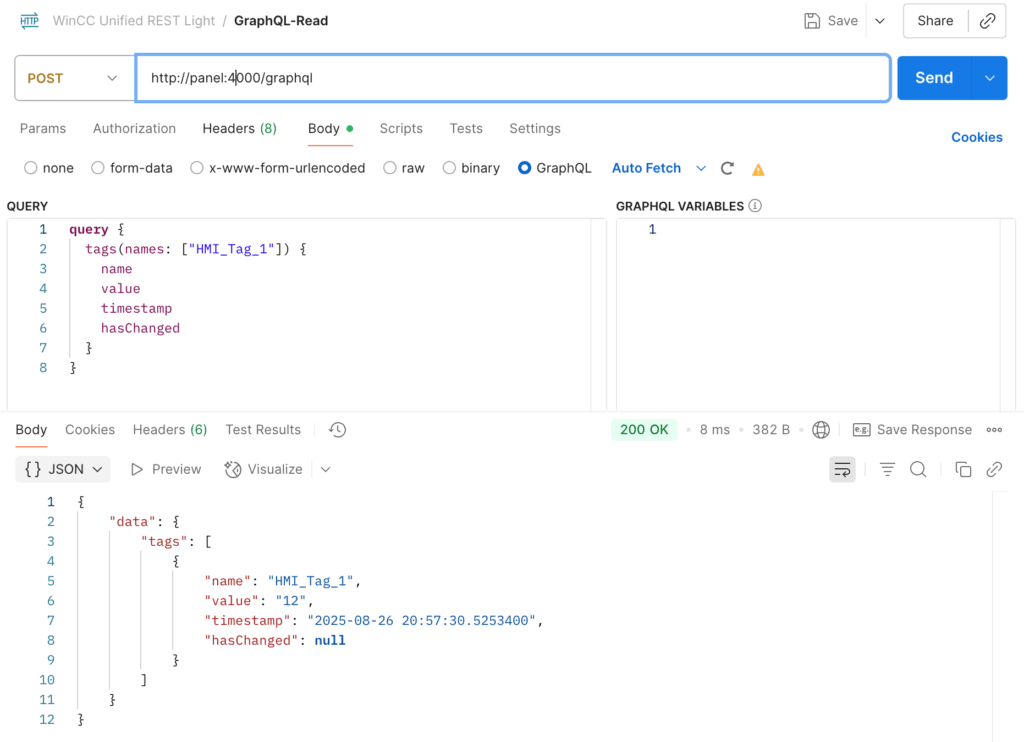

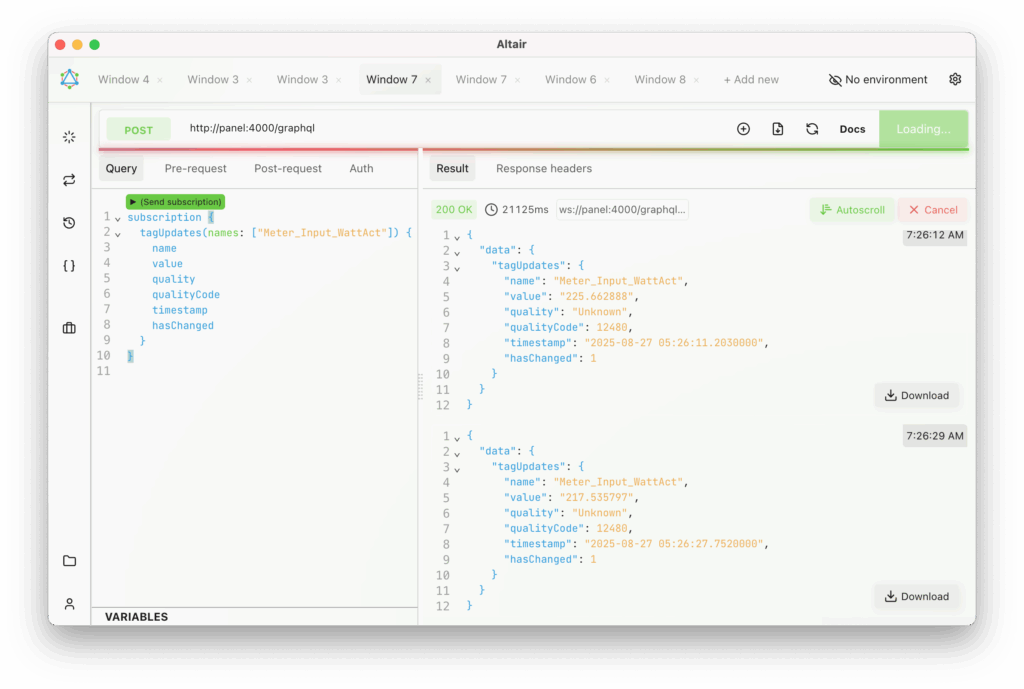

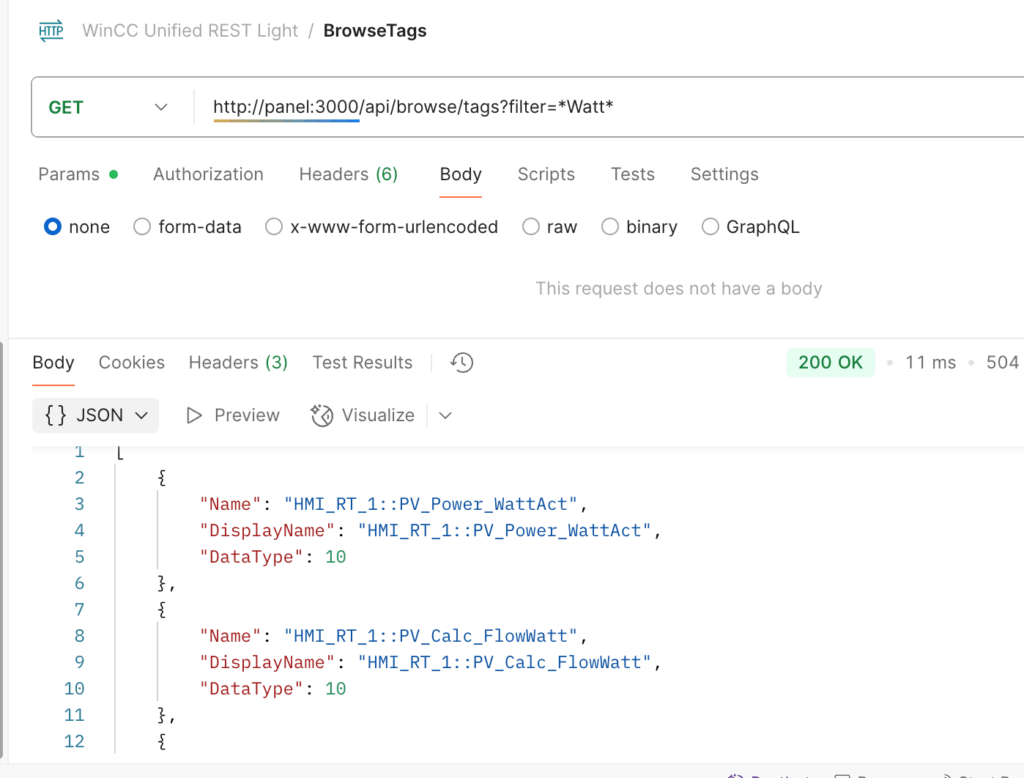

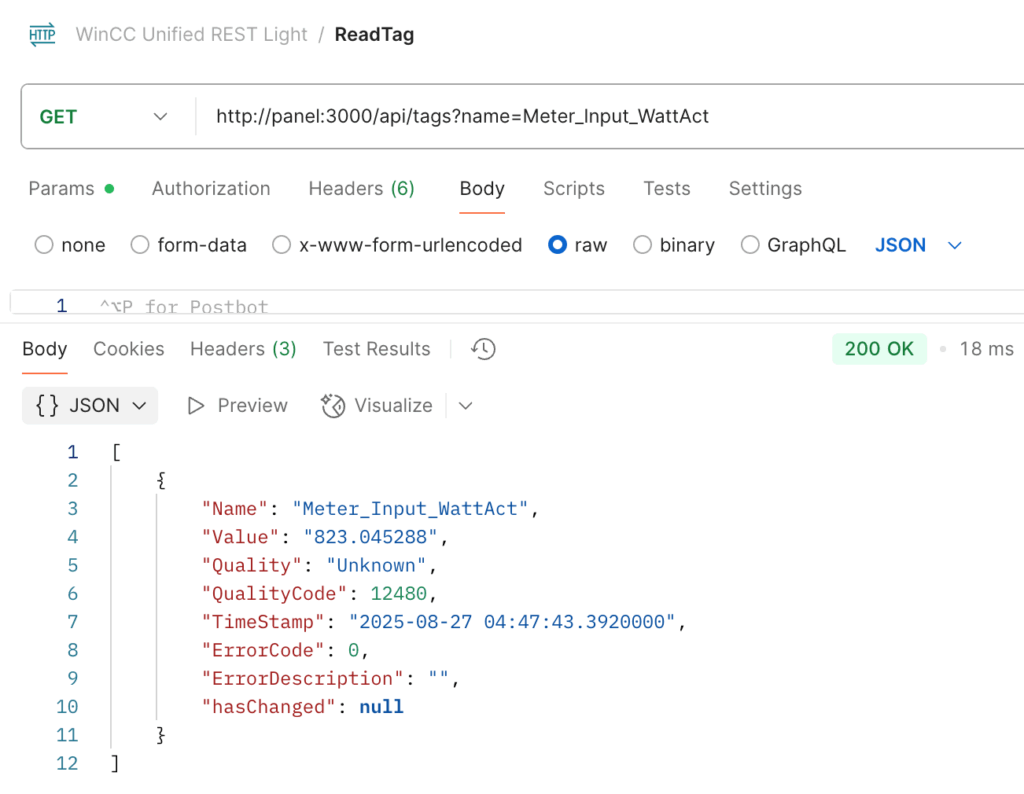

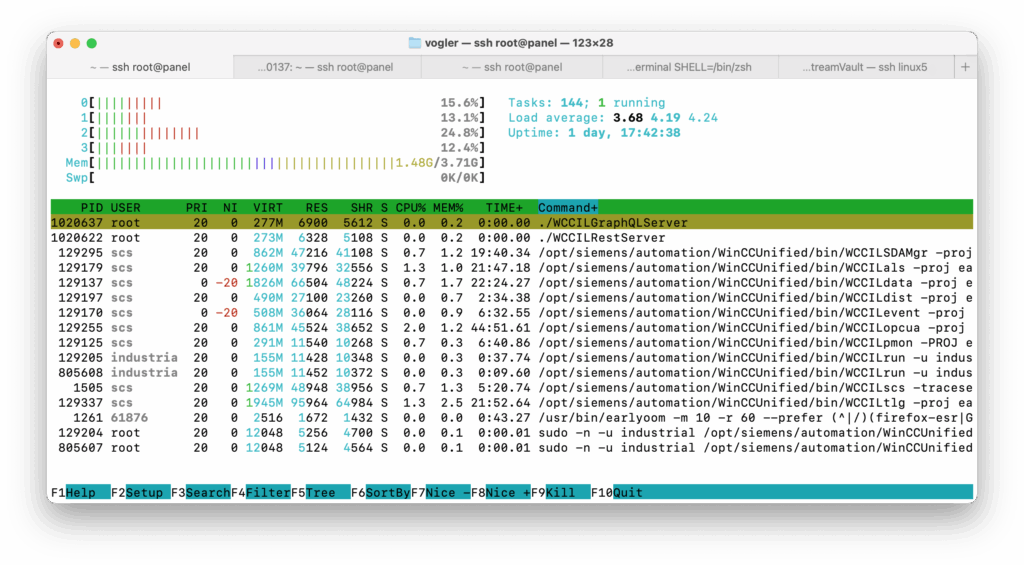

In the newest version, MonsterMQ can receive tags, events, and alerts directly from WinCC Open Architecture and WinCC Unified.

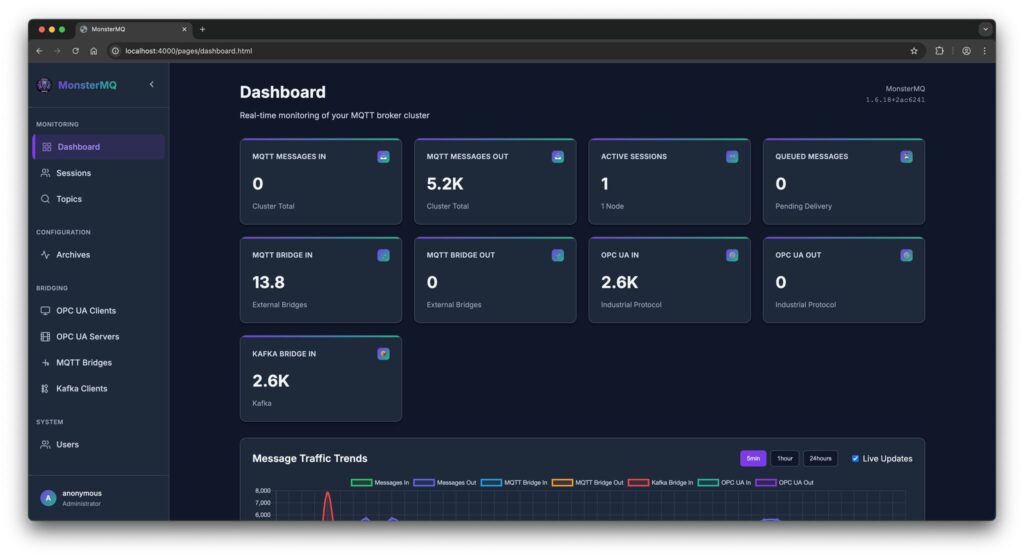

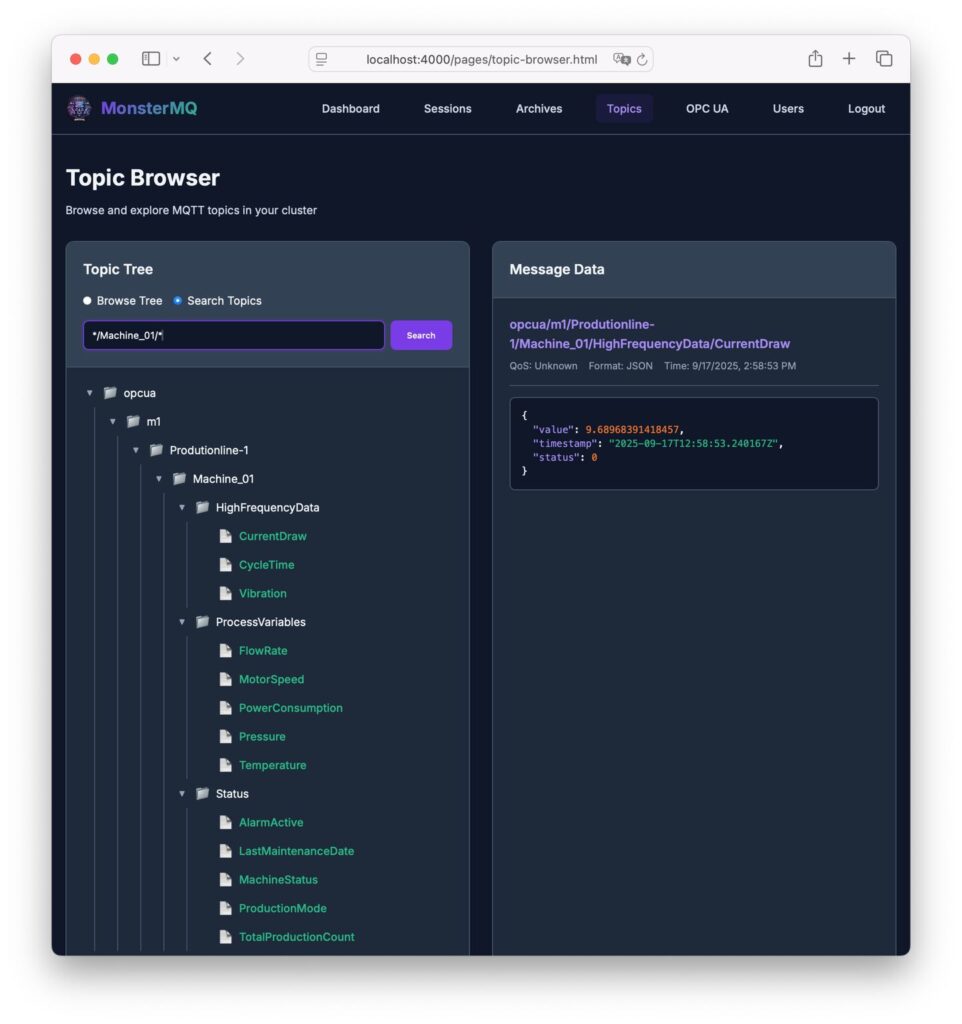

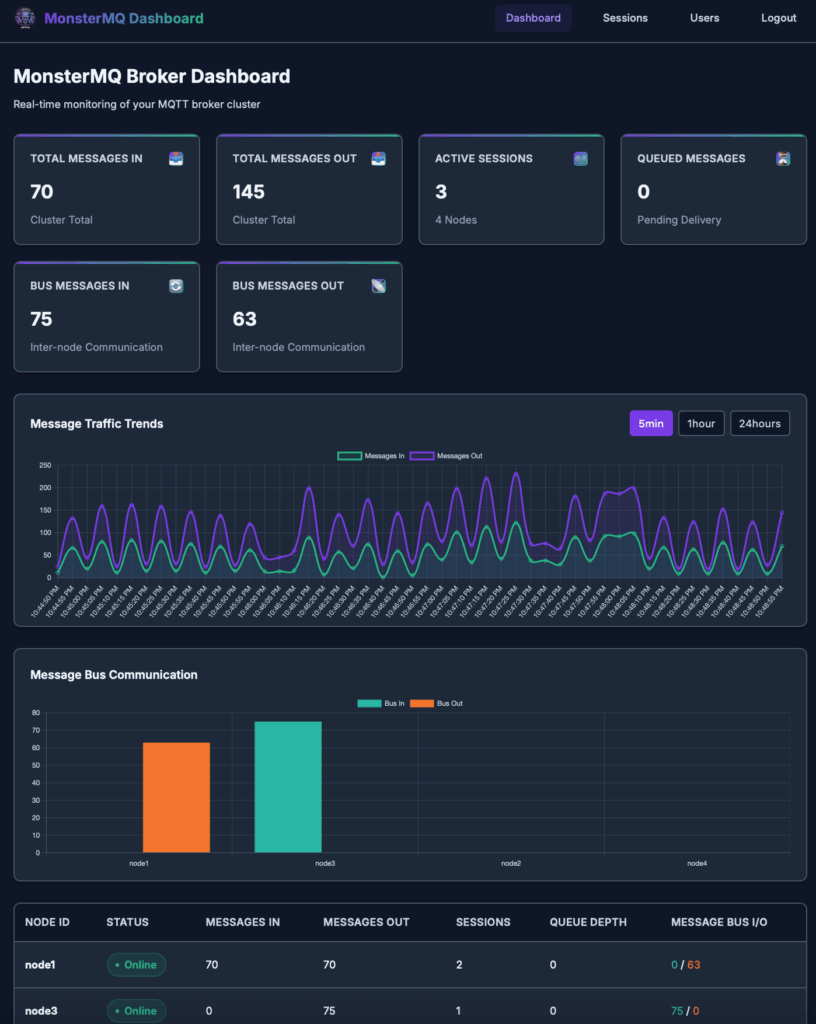

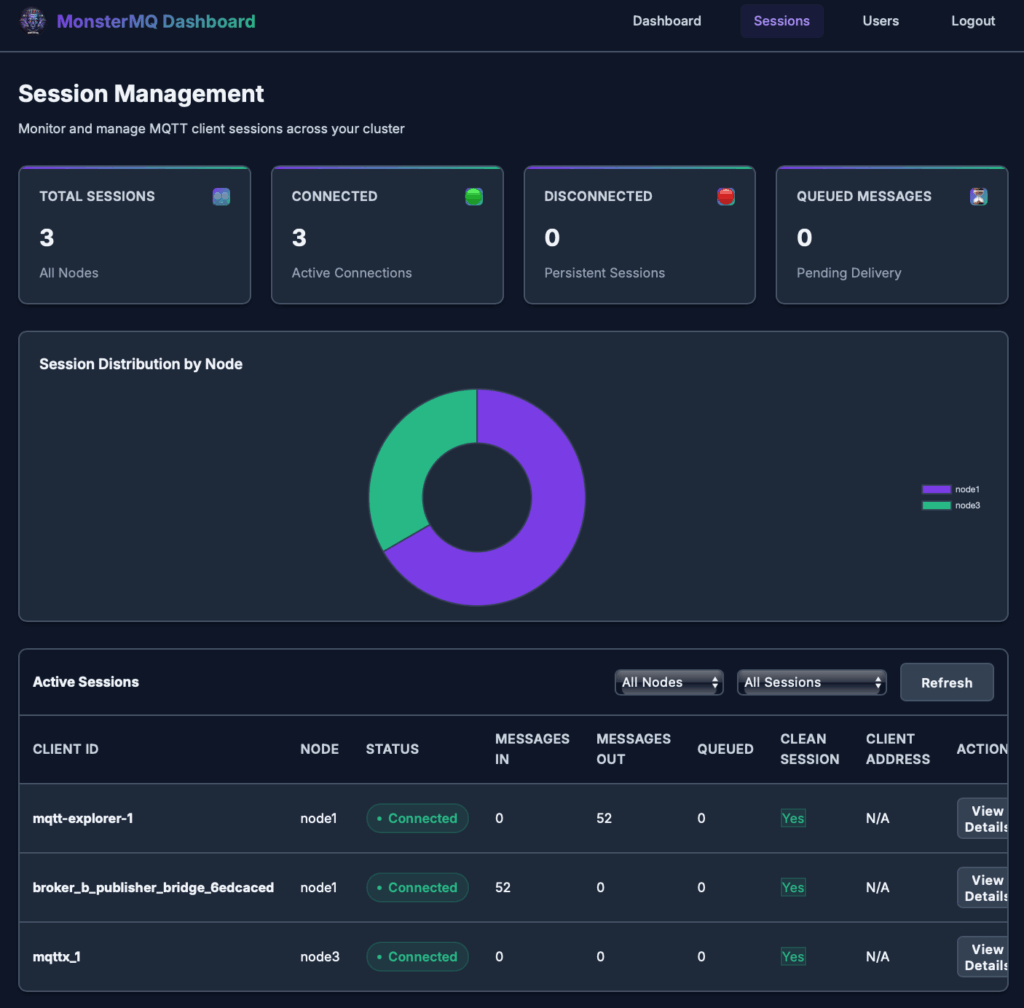

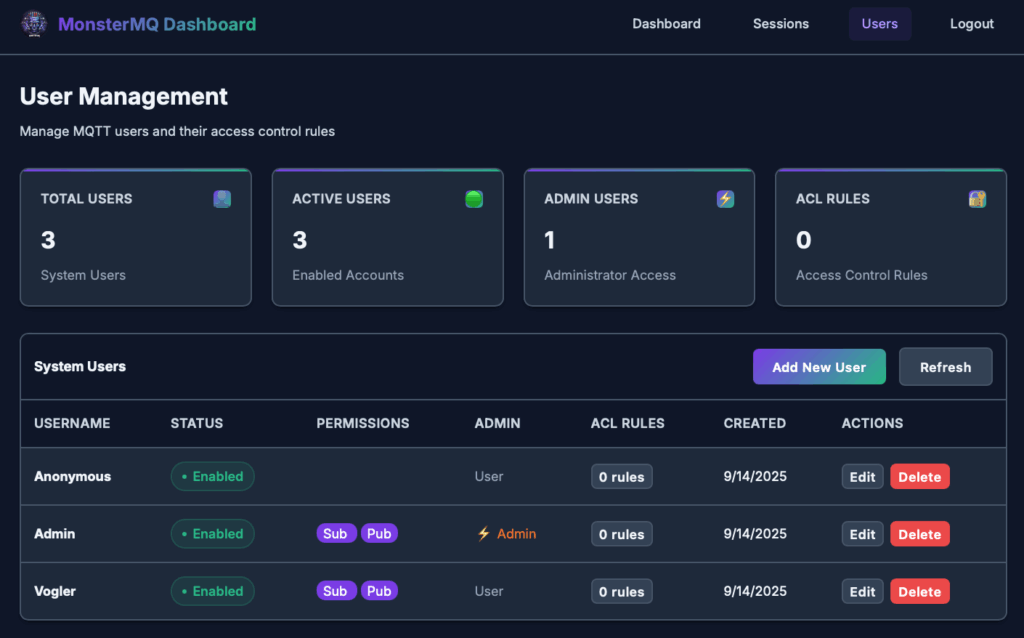

The broker connects to your SCADA systems and brings their data into MonsterMQ. From there, you can use it however you want: analytics, dashboards, or integration with other systems.
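To make the "use it however you want" part concrete, here is a minimal sketch of how a consumer might route incoming messages to different uses by MQTT topic filter. The topic names (`scada/winccoa/tags/...`) and the handlers are hypothetical, not MonsterMQ's actual topic layout. The matcher follows the standard MQTT wildcard rules (`+` for one level, `#` for all remaining levels) but skips the special handling of `$`-prefixed topics.

```python
from typing import Callable, Dict, List


def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if an MQTT-style filter matches a topic.

    '+' matches exactly one level, '#' matches the parent and all
    remaining levels and is only valid as the last filter level.
    """
    f_parts = topic_filter.split("/")
    t_parts = topic.split("/")
    for i, f in enumerate(f_parts):
        if f == "#":
            return i == len(f_parts) - 1
        if i >= len(t_parts):
            return False
        if f != "+" and f != t_parts[i]:
            return False
    return len(f_parts) == len(t_parts)


def route(topic: str, payload: str,
          routes: Dict[str, Callable[[str, str], None]]) -> List[str]:
    """Call every handler whose filter matches; return the matching filters."""
    matched = []
    for topic_filter, handler in routes.items():
        if topic_matches(topic_filter, topic):
            handler(topic, payload)
            matched.append(topic_filter)
    return matched


# Hypothetical consumers: one collects tag values, one collects alerts.
analytics: List[str] = []
alerts: List[str] = []

routes = {
    "scada/+/tags/#": lambda t, p: analytics.append(f"{t}={p}"),
    "scada/+/alerts/#": lambda t, p: alerts.append(f"{t}={p}"),
}

route("scada/winccoa/tags/pump1/speed", "1450", routes)
route("scada/unified/alerts/tank3", "HIGH_LEVEL", routes)
```

In a real setup the `route` call would sit inside the message callback of whatever MQTT client library you use.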

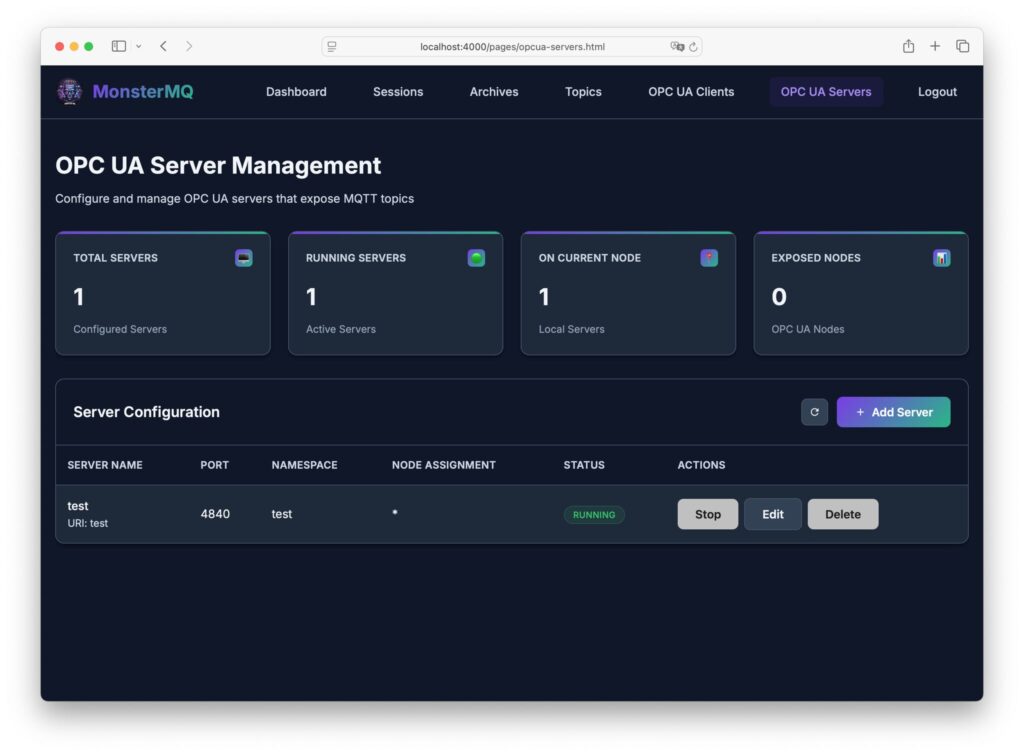

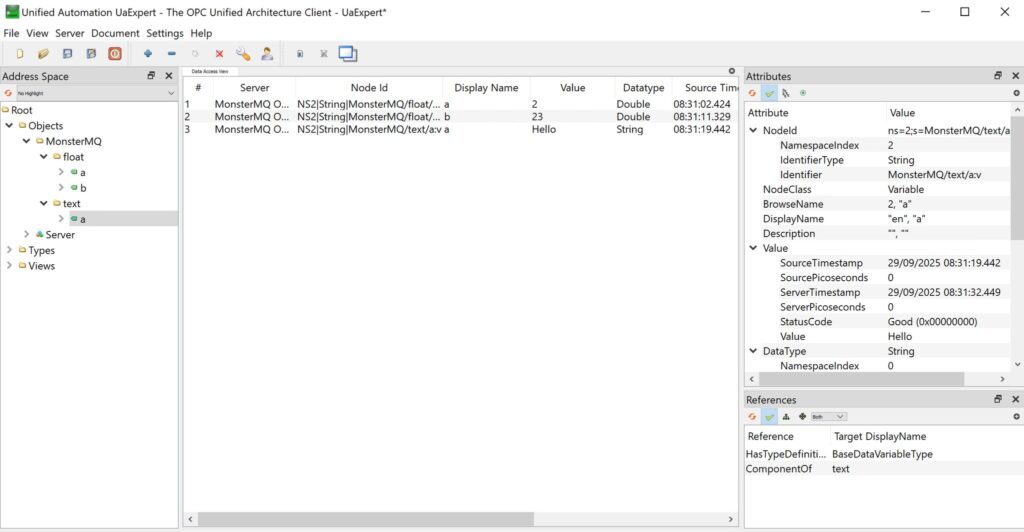

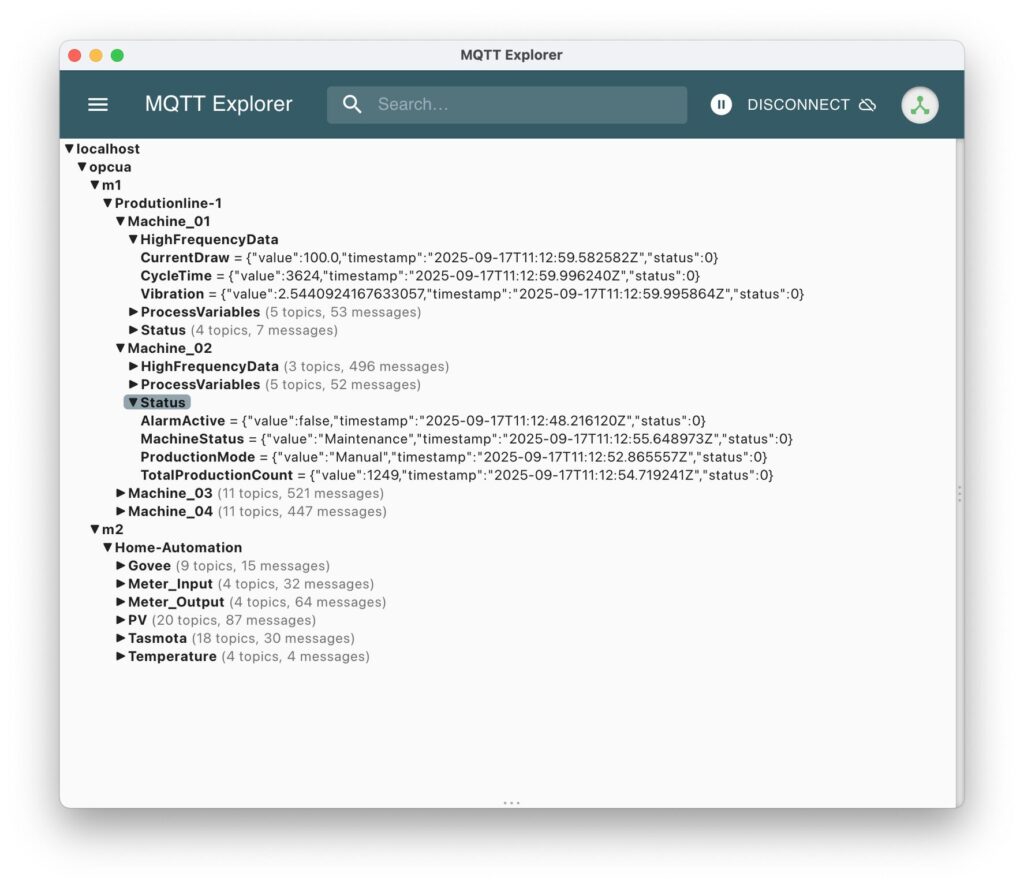

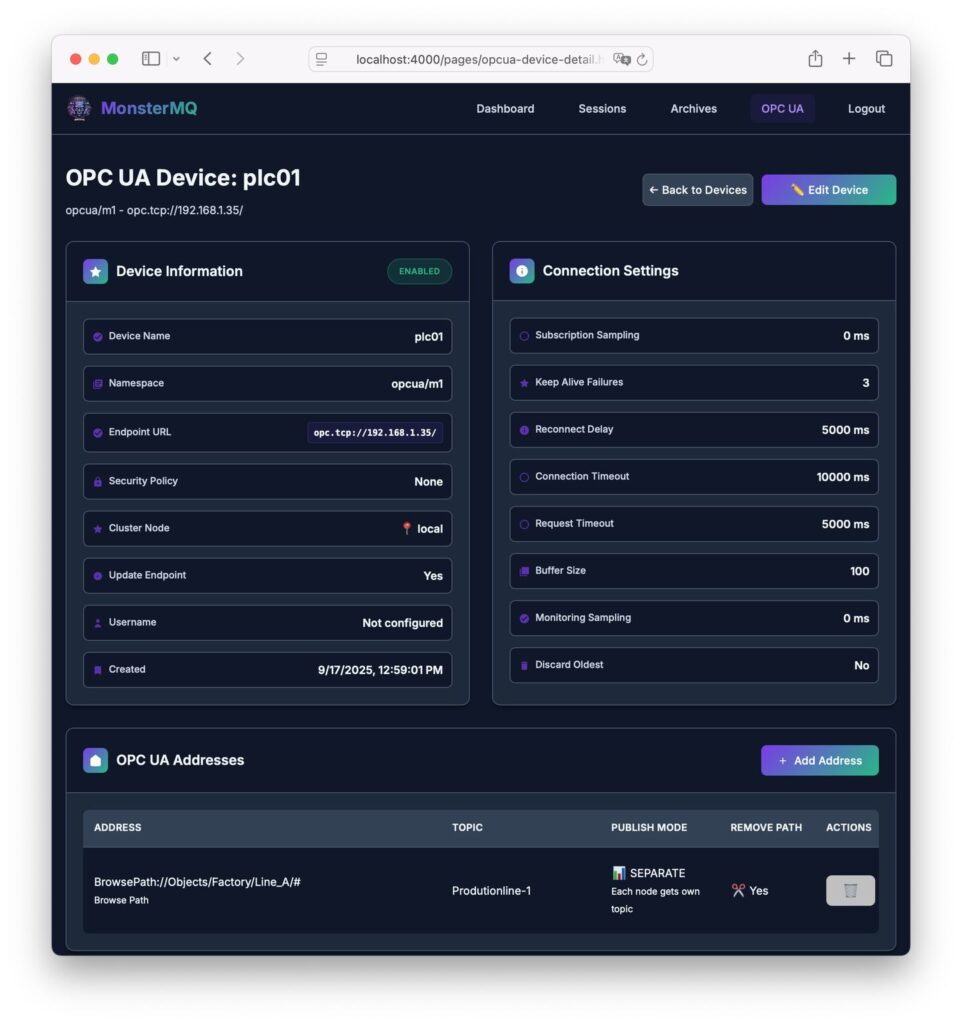

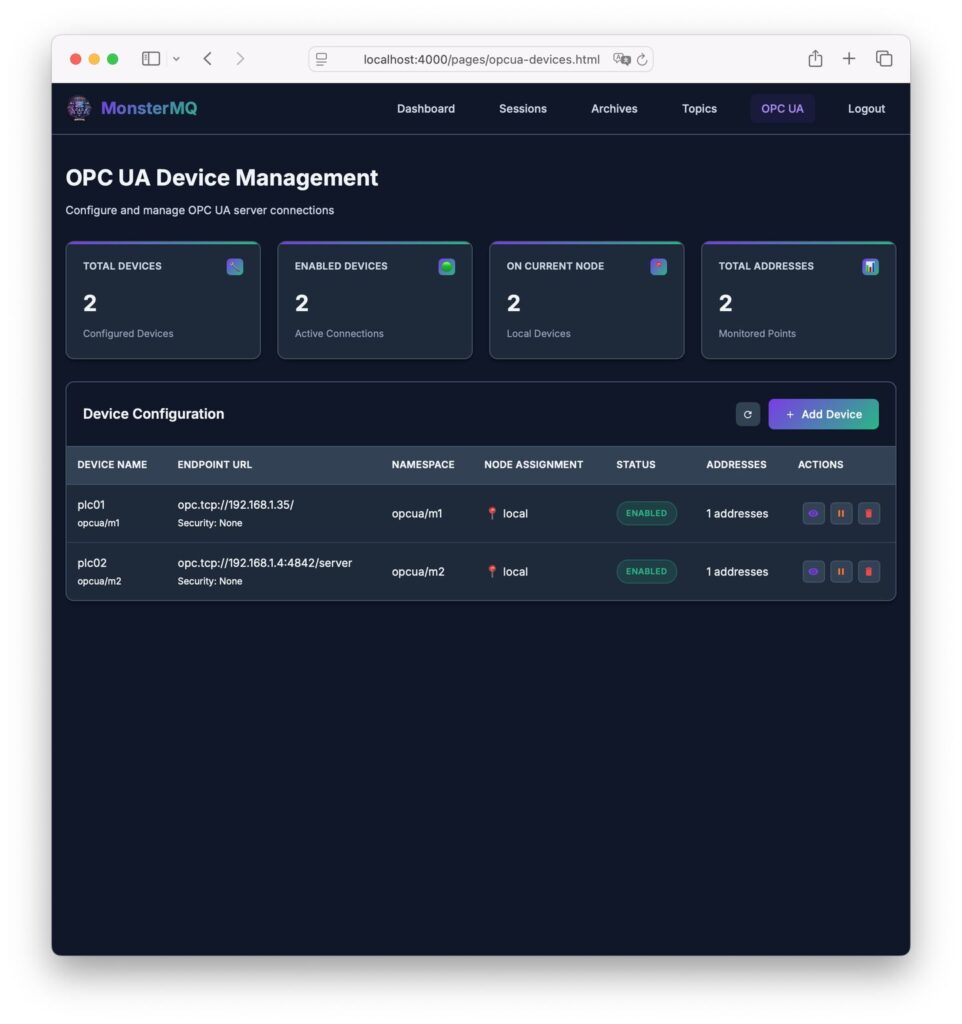

It can also bring OPC UA data natively into the broker, making it a bridge between the OT and IT worlds.

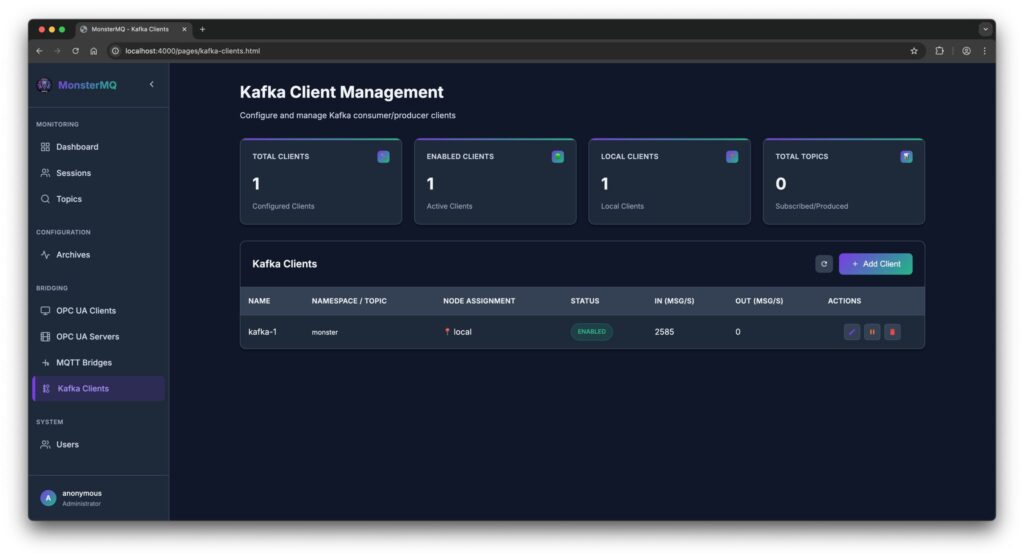

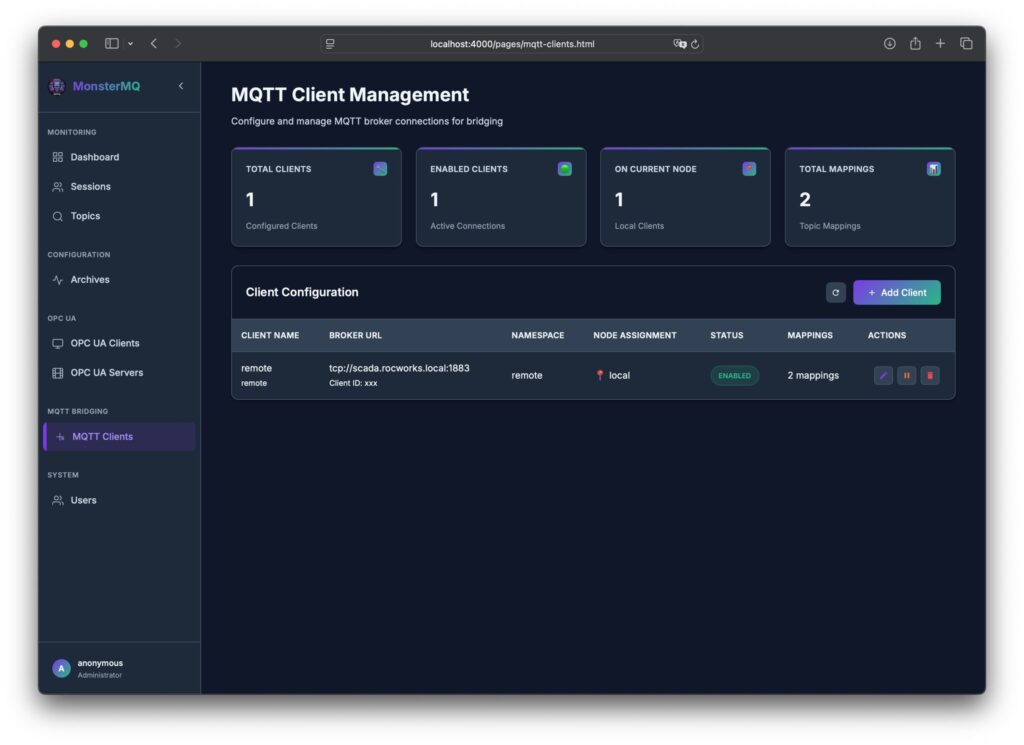

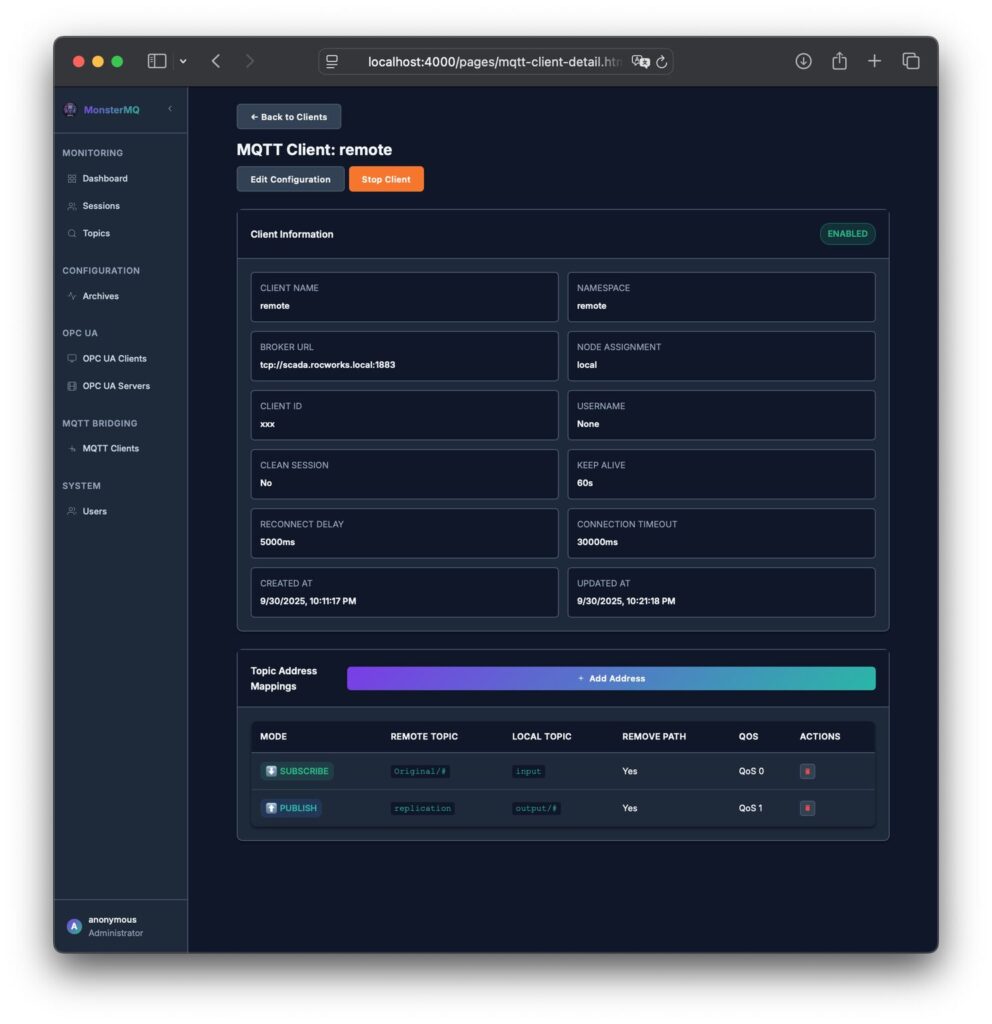

And if you already have an enterprise MQTT broker, MonsterMQ can act as a gateway and forward the data to it.

Please star MonsterMQ on GitHub, and let me know whether tutorials or videos would be helpful: https://lnkd.in/dqPKFCNQ

🔗 MonsterMQ.com